The Great Inversion

How AI Is Automating White-Collar Work First and Reshaping the Global Workforce

Abstract

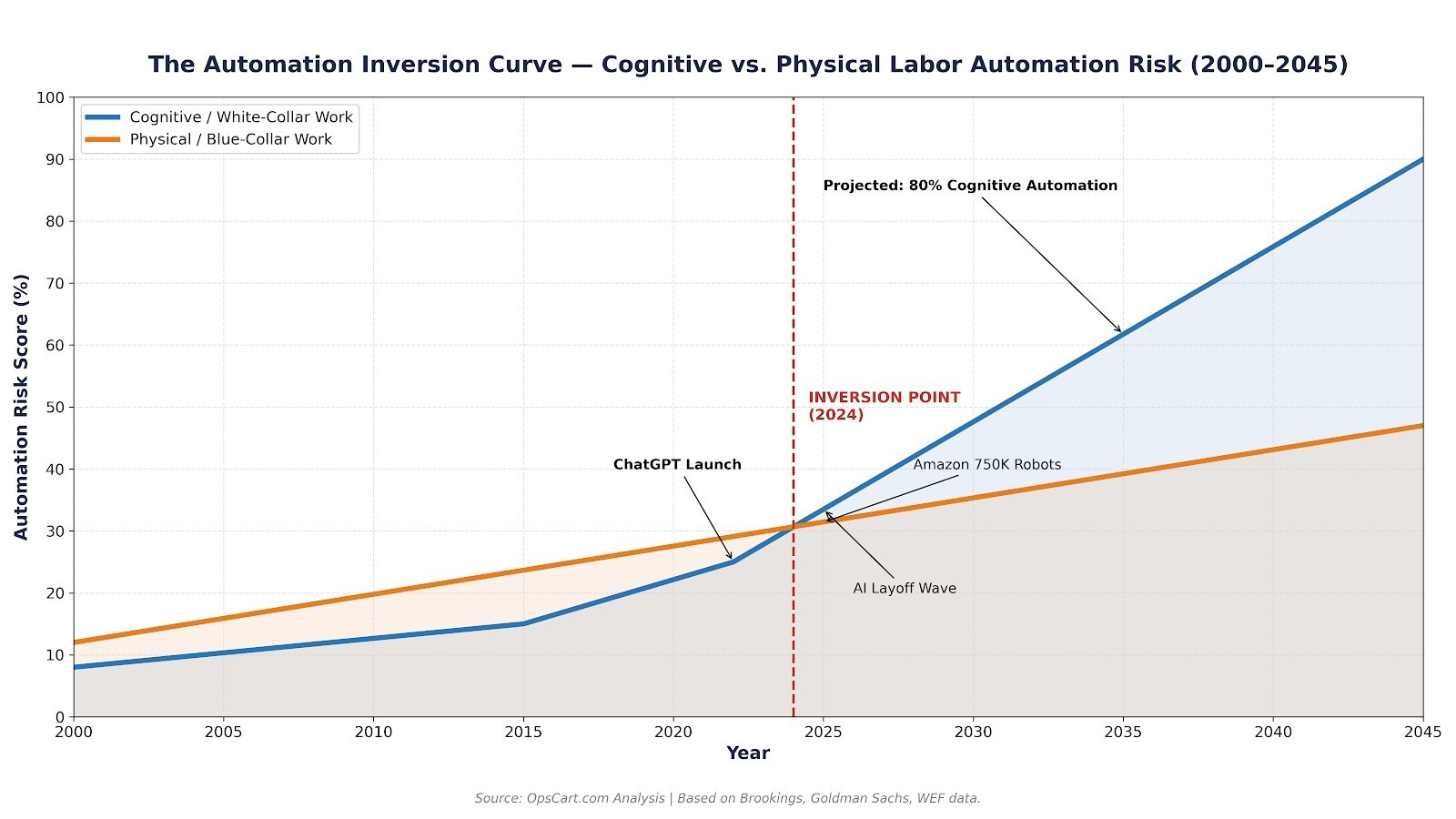

For decades, the prevailing assumption held that automation would consume blue-collar work first—factory floors, warehouses, manual labor—before touching the cognitive professions. That assumption is now inverted.

Generative AI, large language models, and agentic software systems are eliminating routine cognitive “screen work” at a pace that robotics cannot match in the physical domain. The Brookings Institution confirmed this paradigm shift: generative AI excels at the cognitive, nonroutine tasks of better-educated, better-paid office workers, while physical, routine blue-collar work remains largely unaffected barring robotics breakthroughs. Goldman Sachs estimates 300 million full-time roles globally face disruption. The World Economic Forum’s Future of Jobs Report 2025 projects 92 million jobs displaced by 2030, with 170 million created—but the transition demands a fundamental reimagining of who works, what they do, and how skills compound rather than decay.

This article provides a structured, data-driven analysis of this historic inversion for practicing engineers and IT professionals. If you’ve ever pushed a Helm chart to production at 2 AM, debugged a flaky pipeline, or triaged a PagerDuty alert—this is written for you.

Executive Synthesis: The Inversion in 6 Statements

- AI automates cognitive screen work faster than physical labor. Generative AI targets tasks performed through keyboards and monitors—the native medium of large language models. Robotics still struggles with physical dexterity (Brookings, 2024–2025).

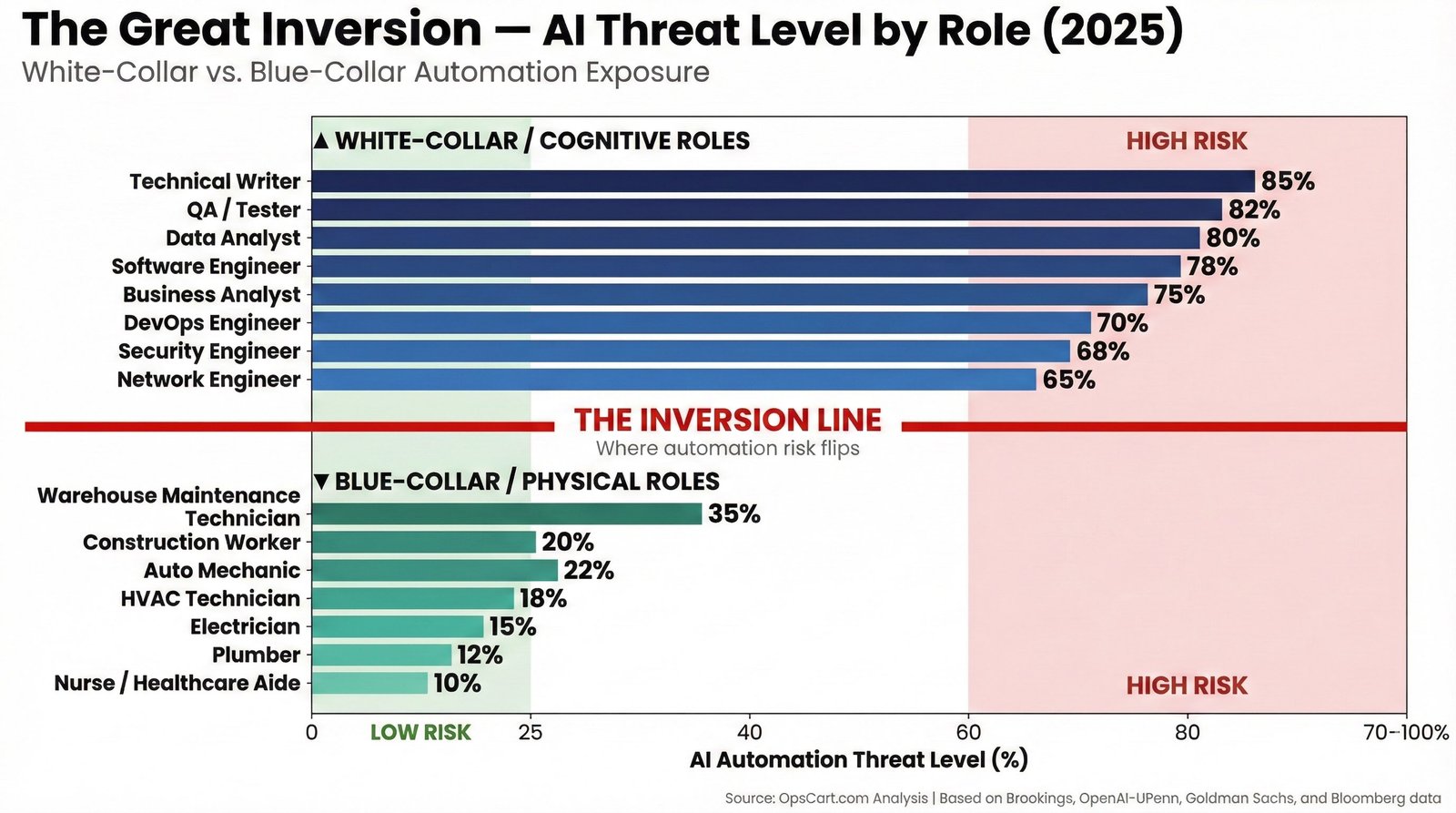

- White-collar task exposure (65–85%) now exceeds blue-collar exposure (10–35%). This reverses a century of industrial automation assumptions (OpenAI/UPenn, Goldman Sachs, Bloomberg).

- Entry-level cognitive roles are the first casualties. Stanford’s Digital Economy Lab found a 13% decline in entry-level hiring for AI-exposed occupations since LLMs proliferated.

- Automation does not remove work—it concentrates it into fewer, higher-leverage roles. Headcount compresses. The work per remaining employee intensifies. (See: Salesforce 9K → 5K support.)

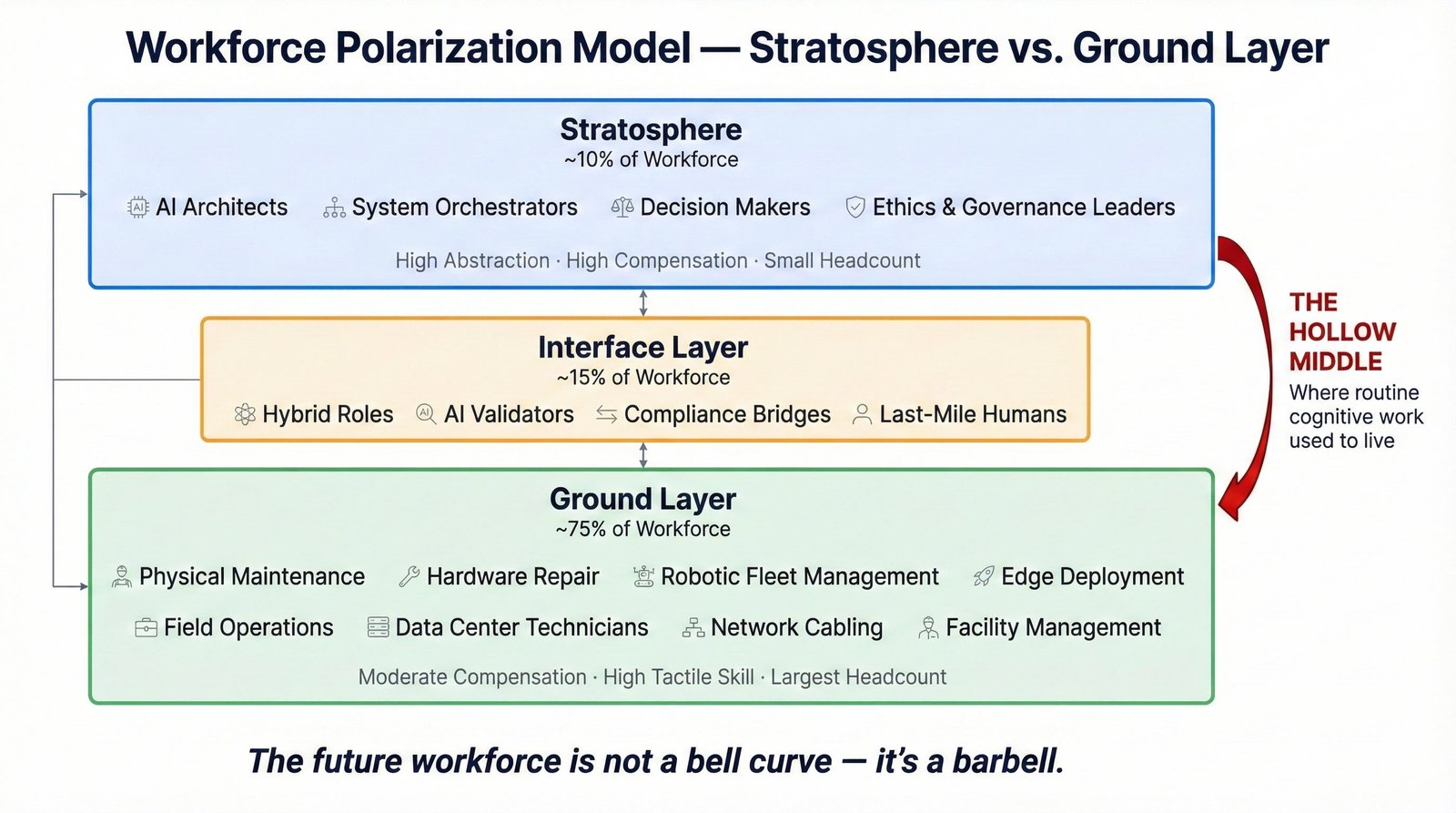

- The middle of the workforce hollows out; a barbell replaces the bell curve. Strategic Orchestration (top) and Physical Maintenance (bottom) persist. Routine cognitive work (middle) evaporates.

- Careers survive by moving up (orchestration) or out (physical systems). Standing still—performing routine screen work—is standing on a melting glacier.

Figure 1: The Workforce Barbell. The middle layer—routine cognitive work—hollows out. What remains is the Stratosphere (strategic AI governance) and the Ground Layer (physical maintenance). The future workforce is not a bell curve.

Key Findings

Throughout this article, high automation percentages refer to task content—not complete job elimination. When we say “AI handles ~80% of work in a role,” we mean approximately 80% of the discrete tasks that compose that role are amenable to AI execution based on current empirical task-exposure models (Bloomberg 2025, OpenAI/UPenn 2023, OECD task frameworks). A role can persist even when most of its tasks are automated—but its headcount compresses, its seniority requirements increase, and its job description transforms. 80% task automation ≠ 80% job loss. The reality is subtler and, in many ways, more consequential.

Figure 2: AI automation threat levels across white-collar and blue-collar roles. Exposure estimates derived from Bloomberg task analysis (2025), OpenAI/UPenn occupation exposure scores (2023), and OECD task framework models. The inversion is clear: cognitive roles face 65–85% task exposure while physical roles sit at 10–35%.

1. Introduction: The Inversion Thesis

For a generation, the automation narrative followed a predictable script: robots take the factory floor, algorithms consume the call center, and eventually—eventually—software comes for the corner office. The timeline was assumed to be linear and bottom-up. Physical labor first, cognitive labor last.

That script has been torn up.

What we’re witnessing is not incremental automation. It’s a structural inversion. AI is automating white-collar cognitive work—the tasks performed on screens, through keyboards, across spreadsheets and codebases—faster and more completely than it is automating physical labor. Here’s the blunt version: a plumber’s job is safer from AI than a junior financial analyst’s. A warehouse maintenance technician has more job security than a mid-level QA engineer running regression test suites.

The Brookings Institution captured this precisely in their 2024–2025 generative AI research: the technology excels at mimicking nonroutine skills that experts considered impossible for computers just a few years ago—including programming, prediction, writing, creativity, and analysis. The industries facing greatest exposure today were ranked among the least exposed to previous forms of automation.

If you’re an engineer reading this and thinking “but I build systems, not spreadsheets”—keep reading. Building systems is exactly the kind of cognitive screen work that falls within AI’s strike zone.

What We Thought Would Happen vs. What Actually Happened

| Assumption (Pre-2023) | Reality (2025–2026) |
|---|---|
| Code would be among the hardest to automate | Code generation was among the first capabilities demonstrated by LLMs |
| Physical trades would disappear first | Physical trades show the lowest AI exposure (10–35%) |
| AI would replace clerical work only | AI replaces analysts, developers, QA engineers, writers |
| Entry-level jobs would be the safest | Entry-level hiring in AI-exposed roles dropped 13% (Stanford) |
| Automation would be gradual over decades | ChatGPT launched Nov 2022; 54,694 AI-attributed U.S. layoffs in 2025 |
| Robotics would lead; software would follow | Software leads; robotics still requires human safety monitors |

Figure 3: The Automation Inversion Curve. The crossover point where cognitive labor risk surpassed physical labor risk occurred around 2023–2024, driven by generative AI breakthroughs. Based on Brookings Institution, Goldman Sachs, and WEF data.

2. Why Cognitive Work Fell First

The Technical Explanation

The answer is architectural, not accidental. Large language models are pattern-completion engines trained on the internet’s corpus of human cognitive output: code, documents, analyses, emails, reports, spreadsheets. Every task that can be decomposed into read input → apply pattern → generate output is within LLM capability today. That describes the majority of what knowledge workers do between 9 AM and 5 PM.

Task Decomposition and the Screen Work Problem

Most white-collar work is “screen work”—tasks performed entirely through digital interfaces. When you analyze a codebase, draft a legal brief, build a financial model, or configure a CI/CD pipeline, every input and output is digital. This is precisely the domain where AI excels because the entire workflow exists within the machine’s native medium.

Compare this to physical labor. A robot that needs to unclog a drain must: perceive the physical environment through imperfect sensors, plan motor actions that are computationally expensive, execute them with fine-grained dexterity that remains mechanically challenging, and adapt in real time to unexpected conditions. This is Moravec’s Paradox in action: tasks trivial for a five-year-old remain frontier challenges in robotics.

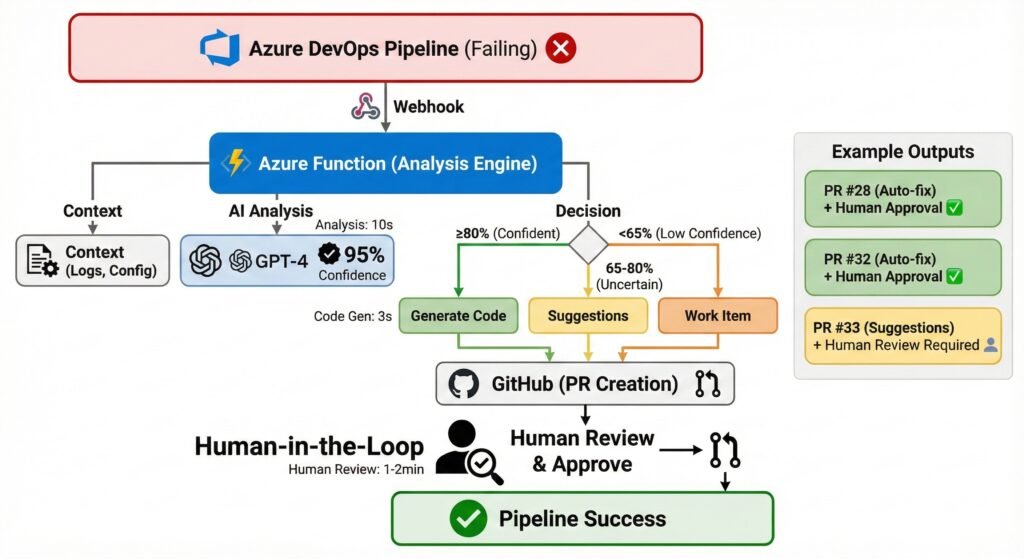

Agent Chaining: Your CI/CD Pipeline, But for Thinking

Modern AI systems don’t operate as single-shot tools. They decompose complex tasks into subtasks, execute them sequentially or in parallel, verify outputs, and iterate. Sound familiar? It’s the exact same pattern that made CI/CD revolutionary for software delivery—but applied to cognitive work itself.

# Think of AI agent chains as cognitive pipelines:

#

# Traditional CI/CD:

# source → build → test → stage → deploy → monitor

#

# Cognitive AI Pipeline:

# ticket_intake → code_analysis → fix_generation → test_writing →

# pr_creation → review_response → release_notes → monitoring_rules

#

# Same architecture. Same retry logic. Same observability patterns.

# Different payload: instead of deploying artifacts, you're deploying decisions.

The irony is sharp: DevOps principles built for deploying software are now being used to deploy the replacement for the people who built those pipelines.
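The pipeline analogy above can be made concrete with a minimal sketch. It assumes each stage is a plain function over a shared context dict; the stage functions here (`ticket_intake`, `fix_generation`, `pr_creation`) are hypothetical stand-ins for model calls, and the retry policy is illustrative rather than taken from any particular agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable

# A stage takes the running context and returns an updated copy.
Stage = Callable[[dict], dict]


@dataclass
class Pipeline:
    stages: list[tuple[str, Stage]]
    max_retries: int = 2
    log: list[str] = field(default_factory=list)

    def run(self, context: dict) -> dict:
        for name, stage in self.stages:
            # One initial attempt plus max_retries retries per stage.
            for attempt in range(1, self.max_retries + 2):
                try:
                    context = stage(context)
                    self.log.append(f"{name}: ok (attempt {attempt})")
                    break
                except Exception as exc:
                    self.log.append(f"{name}: failed (attempt {attempt}): {exc}")
            else:
                raise RuntimeError(f"stage {name!r} exhausted retries")
        return context


# Hypothetical stages; a real system would call a model here.
def ticket_intake(ctx: dict) -> dict:
    return {**ctx, "summary": ctx["ticket"].strip().lower()}


_calls = {"n": 0}


def fix_generation(ctx: dict) -> dict:
    _calls["n"] += 1
    if _calls["n"] == 1:  # simulate one transient failure
        raise ValueError("transient failure")
    return {**ctx, "patch": f"fix for: {ctx['summary']}"}


def pr_creation(ctx: dict) -> dict:
    return {**ctx, "pr_title": ctx["patch"].capitalize()}


pipeline = Pipeline([
    ("ticket_intake", ticket_intake),
    ("fix_generation", fix_generation),
    ("pr_creation", pr_creation),
])
result = pipeline.run({"ticket": "  Login page returns 500  "})
print(result["pr_title"])
print("\n".join(pipeline.log))
```

The per-stage log plays the role that build logs play in CI/CD: it is what a human reviewer reads when a stage retries or fails.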

3. The White-Collar Displacement Wave

Data & Case Studies

The Numbers

Goldman Sachs projects 300 million full-time roles globally face AI disruption (Goldman Sachs AI Economic Impact Report, 2023–2025). Their 2025 analysis found unemployment among 20- to 30-year-olds in tech-exposed occupations rose by nearly 3 percentage points since early 2024. They estimate that 6–7% of all U.S. workers could lose their jobs due to AI adoption.

WEF 2025: Drawing on 1,000+ companies representing 14 million workers across 55 economies: 92 million jobs displaced by 2030; 170 million new roles created (net +78M); 41% of employers plan to reduce workforce where AI automates tasks. (World Economic Forum, Future of Jobs Report, January 2025.)

Challenger, Gray & Christmas: Tracked 54,694 AI-attributed U.S. layoffs in 2025 alone. (Challenger, Gray & Christmas AI-Linked Layoffs Tracker, 2025.)

Stanford Digital Economy Lab: Entry-level hiring in “AI-exposed jobs” dropped 13% since LLMs proliferated. Software development, customer service, and clerical work hit hardest. (Stanford Digital Economy Lab / ADP Employment Analysis, 2025.)

Enterprise Case Studies

Salesforce: “I Need Less Heads”

Marc Benioff revealed in September 2025 that AI agents enabled a reduction of Salesforce’s support team from 9,000 to approximately 5,000 positions. The Agentforce platform now handles 50% of customer conversations, up from zero AI involvement just one year prior. On the Logan Bartlett Show podcast, he said: “I’ve reduced it from 9,000 heads to about 5,000, because I need less heads.” This happened weeks after he told the AI for Good Global Summit that AI wouldn’t cause mass white-collar layoffs.

Amazon: 750,000 Robots and Counting

Internal documents obtained by The New York Times in October 2025 revealed Amazon’s plan to automate 75% of its operations, potentially eliminating the need for 600,000 future hires by 2033. Amazon has deployed over 750,000 robots, about half the size of its 1.5 million workforce. But here’s the part that matters: Amazon also cut 14,000 corporate (white-collar) jobs in 2025. CEO Andy Jassy stated AI means “fewer people” for certain roles.

Microsoft: 15,000 Roles in One Year

Approximately 15,000 roles eliminated in 2025, with 9,000 in a single July round. Nadella framed it as restructuring toward AI services.

The Broader Pattern

IBM: hundreds of back-office roles replaced. CrowdStrike: 500 positions citing AI efficiency. Workday: 1,750 jobs. Paycom: 500+ after automating payroll. Layoffs.fyi tracked 152,000 tech layoffs in 2024 followed by 123,000 in 2025.

Why 80% Task Automation ≠ 80% Job Loss (But Still Hurts)

Critics will challenge the “80% of tasks” framing—and they should. Precision matters. Here’s the distinction:

Task automation means AI can perform approximately 75–85% of the discrete activities within a role. This is measured by task-exposure models from Bloomberg (2025), OpenAI/UPenn (2023), and OECD occupation frameworks. These models decompose roles into component tasks and assess each task’s AI amenability.

Role elimination is a different, smaller number. Even when AI handles most tasks, organizations still need humans for judgment, exceptions, compliance, stakeholder management, and physical presence. What changes is headcount and seniority distribution.
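To make the task-versus-role distinction concrete, here is a minimal sketch of an hours-weighted exposure score. It is a simplification, not the methodology of the cited Bloomberg, OpenAI/UPenn, or OECD models; the `Task` type, the weekly hours, and the amenability flags are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    weekly_hours: float
    ai_amenable: bool  # hypothetical judgment call per task


def task_exposure(tasks: list[Task]) -> float:
    """Share of a role's weekly hours spent on AI-amenable tasks."""
    total = sum(t.weekly_hours for t in tasks)
    if total == 0:
        raise ValueError("role has no task hours")
    exposed = sum(t.weekly_hours for t in tasks if t.ai_amenable)
    return exposed / total


# Illustrative 40-hour week; the numbers are assumptions, not measurements.
role = [
    Task("code generation from tickets", 14, True),
    Task("unit and integration tests", 8, True),
    Task("documentation and PR descriptions", 6, True),
    Task("dependency updates", 4, True),
    Task("architecture decisions", 4, False),
    Task("cross-team negotiation", 4, False),
]

exposure = task_exposure(role)
print(f"task exposure: {exposure:.0%}")
```

The score describes the role's task mix. It says nothing by itself about how many people holding that role remain employed.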

The real impact is threefold:

Headcount compression: A team of 10 engineers becomes a team of 4 doing the same output. Salesforce demonstrated this at scale: 9,000 support roles compressed to 5,000 (a 44% headcount reduction, not a 50% task-automation figure).

Span-of-control expansion: Each remaining engineer manages AI agents that replaced their former colleagues. More responsibility, same salary bracket, exponentially higher cognitive load.

Junior-role collapse: If AI handles 80% of entry-level tasks, organizations skip the entry-level hire entirely. Stanford’s 13% hiring decline in AI-exposed roles reflects this. The pipeline for growing senior talent constricts.
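The headcount arithmetic behind the first point is simple enough to check directly. This sketch assumes total output stays constant after the reduction, which matches the "same output" framing above but is an assumption, not a measured result.

```python
def headcount_reduction(before: int, after: int) -> float:
    """Fraction of positions removed."""
    if before <= 0 or after < 0:
        raise ValueError("headcounts must be positive")
    return (before - after) / before


def output_per_head_multiplier(before: int, after: int) -> float:
    """How much more each remaining person covers, assuming constant total output."""
    if after <= 0:
        raise ValueError("remaining headcount must be positive")
    return before / after


for label, before, after in [
    ("Salesforce support", 9_000, 5_000),
    ("10-engineer team", 10, 4),
]:
    cut = headcount_reduction(before, after)
    load = output_per_head_multiplier(before, after)
    print(f"{label}: {cut:.0%} fewer roles, {load:.1f}x output per remaining head")
```

Under the constant-output assumption, a 44% cut means each remaining person covers 1.8 times the previous load, and a 10-to-4 compression means 2.5 times.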

4. The Robot Paradox: Why Physical Jobs Persist

Amazon’s Vulcan robot, unveiled in May 2025, represents the state of the art: touch sensitivity, pressure calibration, AI-powered learning from failures. But Amazon’s own director of applied science acknowledged he doesn’t believe in 100% automation. Vulcan alerts humans when it encounters tasks beyond its capabilities.

Tesla launched its robotaxi in Austin on June 22, 2025—with human safety monitors in the passenger seat. Despite Musk’s 2019 prediction of a million robotaxis by 2020, the December 2025 fleet numbered approximately 135 vehicles. NHTSA opened an investigation one day after launch.

This is the physical-world bottleneck: even with billions invested, autonomous navigation progresses incrementally. AI’s conquest of screen work accelerates exponentially because the digital domain has none of the physical world’s unpredictability.

5. The 80/20 Rule and the Age of Amplification

If you’ve managed production systems, you already think in terms of automation ratios. What percentage of your deployments are zero-touch? What percentage of alerts auto-remediate? Now apply that thinking to your own job.

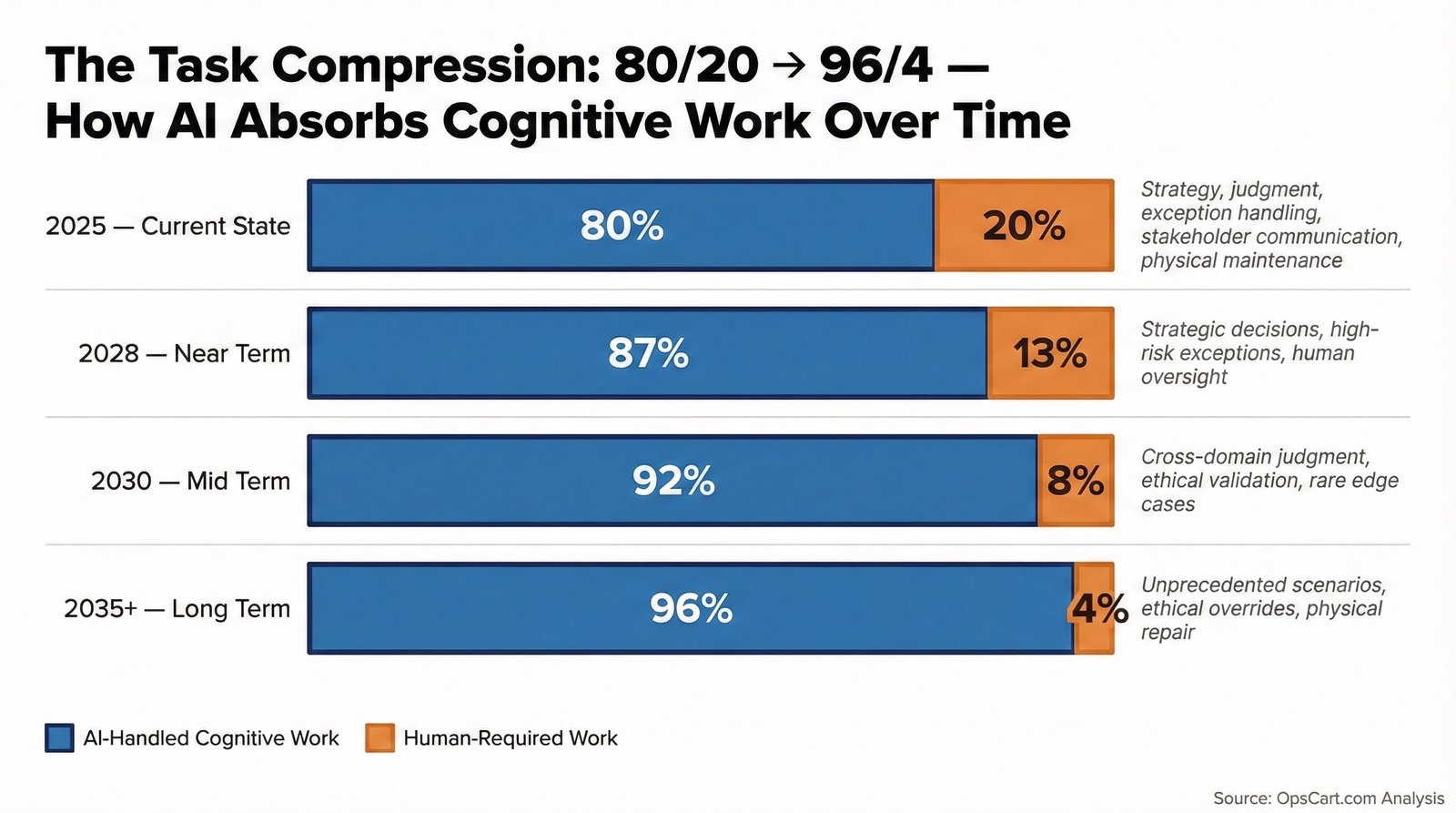

Figure 4: The task compression trajectory. Measured task-exposure levels currently range from 65–85% for cognitive roles (Bloomberg 2025, OpenAI/UPenn 2023). Model projections suggest further compression to 90/10 by 2030 and 95+/5 by 2035 (WEF, McKinsey). The human slice shrinks but its value per unit skyrockets.

Today, current empirical models estimate AI-amenable task exposure at ~75–85% for cognitive roles across many knowledge-work domains (Bloomberg Research, 2025). Humans provide the remaining 15–25%: strategy, judgment, exception handling, stakeholder communication. Projections suggest this ratio compresses to 90/10, then 95/5. At each stage, the human’s contribution becomes exponentially more valuable, and exponentially more demanding.
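One way to read the compression from 80/20 to 90/10 to 95/5 is as a leverage ratio: how many units of total task volume ride on each unit of human-handled work. The sketch below assumes total task volume is fixed, which is a simplification introduced here for illustration.

```python
def human_leverage(ai_share_pct: int) -> float:
    """Total task volume per unit of human-handled work, for a given AI share (in percent)."""
    human_pct = 100 - ai_share_pct
    if not 0 < human_pct <= 100:
        raise ValueError("AI share must be between 0 and 99 percent")
    return 100 / human_pct


for ai_share in (80, 90, 95):
    print(f"{ai_share}/{100 - ai_share}: leverage {human_leverage(ai_share):.0f}x")
```

Under that assumption, each step in the trajectory doubles the leverage on the remaining human work: 5x, then 10x, then 20x.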

Role-by-Role: What AI Handles vs. What Stays Human

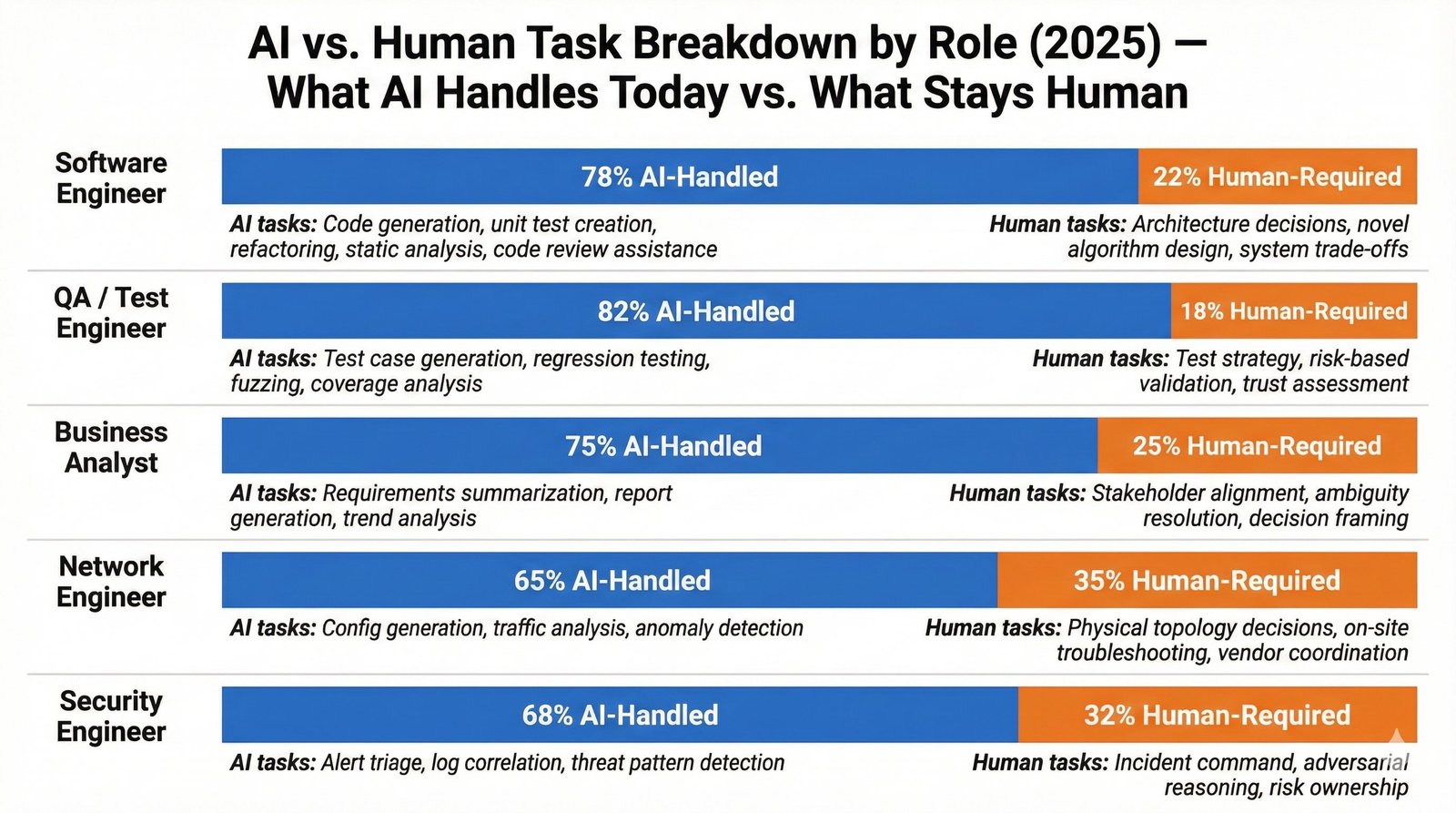

The DevOps task breakdown is one slice. Let’s map every major IT role so you can find yourself in the data. (As shown in Figure 5 below.)

Figure 5: Task automation exposure across five key IT roles. Exposure estimates derived from Bloomberg task analysis (2025), OpenAI/UPenn occupation exposure models (2023), and enterprise adoption patterns. Note how physical-adjacent roles (Network, Security) retain more human-required work than pure cognitive roles (QA, Business Analyst).

💻 Software Engineer — ~78% Task Exposure / ~22% Human-Required

# Software Engineer: Weekly Task Breakdown (2025 Reality)

#

# AI-AMENABLE TASKS (~78% of task content):

# - Code generation from specs, tickets, user stories → AI-generated

# - Unit & integration test writing → AI-generated

# - Code review for patterns, bugs, style violations → AI-scanned

# - Refactoring & optimization suggestions → AI-analyzed

# - API integration boilerplate & SDK wrappers → AI-generated

# - Documentation, README files, inline comments → AI-drafted

# - Dependency updates & migration scripts → AI-handled

# - PR descriptions & commit messages → AI-written

#

# HUMAN-REQUIRED TASKS (~22% of task content):

# - Architecture decisions: "monolith vs. microservice for THIS org's

# team size, hiring timeline, and compliance requirements"

# - Novel algorithm design for domain-specific edge cases

# - Cross-team negotiation: "your API contract breaks our SLA"

# - Production incident judgment when 3 services fail simultaneously

# - Technical debt prioritization: what to fix NOW vs. what to live with

# - Mentoring junior developers (while that role still exists)

Career signal: The engineer who writes code is being replaced. The engineer who decides what code should exist, why, and at what trade-off is becoming more valuable. Architecture > Implementation.

🧪 QA / Test Engineer — ~82% Task Exposure / ~18% Human-Required

# QA / Test Engineer: Weekly Task Breakdown (2025 Reality)

#

# AI-AMENABLE TASKS (~82% of task content):

# - Test case generation from requirements/user stories → AI-generated

# - Regression test suite execution & reporting → AI-orchestrated

# - Bug report drafting with reproduction steps → AI-written

# - Test data generation (synthetic datasets, edge cases) → AI-produced

# - Code coverage analysis & gap identification → AI-analyzed

# - Performance/load test scripting (k6, JMeter configs) → AI-generated

# - API contract testing & schema validation → AI-automated

# - Visual regression testing → AI-compared

#

# HUMAN-REQUIRED TASKS (~18% of task content):

# - Exploratory testing: "something FEELS wrong about this flow"

# - UX judgment calls that require empathy & user understanding

# - Edge case identification from domain expertise (pharma, finance)

# - Risk-based test strategy: "what breaks the business, not just the code"

# - Compliance validation against regulatory frameworks (GxP, SOC2)

# - Communicating quality trade-offs to stakeholders under time pressure

Career signal: QA is among the most exposed roles because testing is fundamentally pattern-matching. The survivors will be those who bring domain expertise and risk judgment that AI lacks. “Does this meet FDA submission requirements?” is a question AI can assist with but cannot own.

📊 Business Analyst — ~75% Task Exposure / ~25% Human-Required

# Business Analyst: Weekly Task Breakdown (2025 Reality)

#

# AI-AMENABLE TASKS (~75% of task content):

# - Requirements documentation from meeting transcripts → AI-drafted

# - Data gathering, aggregation & summarization → AI-processed

# - Report generation (status, KPI, dashboards) → AI-generated

# - Market research compilation & competitive analysis → AI-researched

# - Meeting notes, action items & follow-up emails → AI-transcribed

# - Process mapping & workflow documentation → AI-diagrammed

# - User story writing from high-level requirements → AI-drafted

# - Impact analysis for proposed changes → AI-modeled

#

# HUMAN-REQUIRED TASKS (~25% of task content):

# - Stakeholder relationship management & trust-building

# - Political navigation: "finance wants X, engineering wants Y,

# the CEO wants Z — here's what we actually do"

# - Ambiguity resolution: translating vague exec vision into specs

# - Strategic prioritization when everything is "top priority"

# - Change management: getting humans to adopt new processes

# - Reading the room in workshops, interviews, discovery sessions

Career signal: The BA who collects and documents requirements is automating away. The BA who navigates organizational politics, resolves ambiguity, and drives adoption becomes more critical. The job shifts from “what do you need?” to “here’s what you actually need, and here’s how we get buy-in.”

🌐 Network Engineer — ~65% Task Exposure / ~35% Human-Required

# Network Engineer: Weekly Task Breakdown (2025 Reality)

#

# AI-AMENABLE TASKS (~65% of task content):

# - Config generation (ACLs, routing tables, VLAN setups) → AI-generated

# - Monitoring, alerting & automated triage → AI-processed

# - Network topology documentation & diagrams → AI-mapped

# - Capacity planning models & forecasting → AI-modeled

# - Compliance audit reports (PCI-DSS, HIPAA network) → AI-drafted

# - Firmware update planning & compatibility checks → AI-analyzed

# - DNS/DHCP/IPAM management for routine changes → AI-handled

#

# HUMAN-REQUIRED TASKS (~35% of task content):

# - Physical cabling, rack work, patch panel management ← HANDS-ON

# - On-site troubleshooting: "is it the SFP or the cable?" ← PHYSICAL

# - Data center walkthrough & environmental assessment ← PHYSICAL

# - Vendor negotiations & contract management

# - Disaster recovery execution (not planning — DOING it)

# - Debugging intermittent issues that span physical + logical layers

# - Emergency response when the fiber gets cut at 2 AM ← PHYSICAL

Career signal: Network engineering is the most physically-protected IT role. The 35% human requirement is dominated by tasks that require being physically present with tools in hand. The engineer who can configure an AI-managed SD-WAN and trace a fiber path through a building has a career that compounds. This is the Ground Layer advantage in action.

🛡 Security Engineer — ~68% Task Exposure / ~32% Human-Required

# Security Engineer: Weekly Task Breakdown (2025 Reality)

#

# AI-AMENABLE TASKS (~68% of task content):

# - Vulnerability scanning, prioritization & triage → AI-processed

# - Log correlation & anomaly detection (SIEM analysis) → AI-detected

# - Security policy document generation & updates → AI-drafted

# - Threat intelligence aggregation & reporting → AI-compiled

# - Compliance report drafting (SOC2, ISO 27001, NIST) → AI-generated

# - Phishing email analysis & classification → AI-classified

# - Patch management prioritization → AI-ranked

# - Access review & IAM audit reports → AI-analyzed

#

# HUMAN-REQUIRED TASKS (~32% of task content):

# - Threat modeling: "how would an adversary attack THIS specific system?"

# - Incident response leadership during active breaches

# - Red team strategy & creative adversarial thinking

# - Zero-day judgment: "is this a real threat or noise?"

# - Regulatory interpretation: "does this architecture satisfy GDPR?"

# - Security architecture review for novel system designs

# - Social engineering assessment (human behavior, not code)

# - Executive communication during security incidents

Career signal: Security has a built-in moat: adversarial thinking. AI is excellent at pattern detection but weak at creative attack simulation. The security engineer who thinks like an attacker, makes judgment calls under pressure, and translates risk into business language has compounding career value. The one who only runs scan-and-report workflows is automating away.

⚙ DevOps Engineer — ~70% Task Exposure / ~30% Human-Required

# DevOps Engineer: Weekly Task Breakdown (2025 Reality)

#

# AI-AMENABLE TASKS (~70% of task content):

# - Writing Terraform/Helm/Pulumi configs from requirements → AI-generated

# - Pipeline YAML (GitHub Actions, Azure DevOps, Jenkins) → AI-generated

# - Reviewing PR diffs for security & best practices → AI-scanned

# - Drafting runbooks and incident postmortems → AI-drafted

# - Log analysis, anomaly detection & alert correlation → AI-processed

# - Cost optimization analysis & rightsizing reports → AI-analyzed

# - Documentation updates & architecture diagrams → AI-maintained

# - Container image scanning & CVE triage → AI-classified

#

# HUMAN-REQUIRED TASKS (~30% of task content):

# - Architectural decisions: "EKS vs AKS for THIS org's compliance needs"

# - Incident response when 3 systems cascade-fail at 3 AM

# - Explaining to the CTO why the migration takes 6 months, not 6 weeks

# - Physical: racking hardware, debugging network cabling, DC walkthrough

# - Compliance judgment: "does this meet pharma GxP requirements?"

# - Toil identification: knowing which automation is WORTH building

# - Cross-team platform adoption: getting developers to use your tools

Career signal: DevOps sits at the intersection of cognitive and physical. The engineer managing AKS clusters who also understands the physical infrastructure underneath has a dual-layer career advantage. The one who only writes pipeline YAML is in the 70% that AI absorbs.

How Exposed Is Your Role? A Self-Diagnostic

Before reading further, run your own role through this five-dimension assessment. Score honestly.

| Dimension | Low Exposure (1–2) | High Exposure (4–5) | Your Score |
|---|---|---|---|
| % of work done entirely on a screen | <40% screen time; hands-on daily | >90% screen time; keyboard-only | ___/5 |
| % of output that is review vs. creation | You create novel designs & strategy | You review, edit, approve repeatable work | ___/5 |
| % of tasks that repeat weekly | <20% of tasks are the same each week | >70% of tasks repeat weekly | ___/5 |
| % of value from judgment under uncertainty | Your value = making hard calls no one else can | Your value = speed and consistency | ___/5 |
| Physical presence required? | Yes: hardware, labs, DC, on-site daily | No: fully remote, purely digital | ___/5 |

13–19 = Moderate Exposure. Parts of your role are defensible (judgment, physical, novel creation), but the routine portions are automating away. Shift your time allocation toward the human-required slice.

5–12 = Lower Exposure. Your role involves significant physical presence, novel problem-solving, or adversarial thinking. Focus on deepening those differentiators rather than learning to use AI tools for the parts AI already handles.
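The self-diagnostic can be tallied with a short script. This is a sketch under stated assumptions: the dimension keys are hypothetical names for the five table rows, higher scores mean more exposure, and the "High Exposure" label for totals of 20–25 is inferred from the 5–25 score range rather than spelled out in the bands above.

```python
# Hypothetical keys for the five rows of the self-diagnostic table.
DIMENSIONS = (
    "screen_work",
    "review_vs_creation",
    "weekly_repetition",
    "speed_over_judgment",
    "remote_only",
)


def exposure_band(scores: dict[str, int]) -> tuple[int, str]:
    """Sum five 1-5 scores and map the total to an exposure band."""
    missing = set(DIMENSIONS) - scores.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    for dim in DIMENSIONS:
        if not 1 <= scores[dim] <= 5:
            raise ValueError(f"{dim} must be between 1 and 5")

    total = sum(scores[dim] for dim in DIMENSIONS)
    if total <= 12:
        band = "Lower Exposure"
    elif total <= 19:
        band = "Moderate Exposure"
    else:
        band = "High Exposure"  # assumed band for totals of 20-25
    return total, band


# Example: a fully remote engineer doing mostly repeatable review work.
example = {
    "screen_work": 5,
    "review_vs_creation": 4,
    "weekly_repetition": 4,
    "speed_over_judgment": 3,
    "remote_only": 5,
}
print(exposure_band(example))
```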

6. Evolution of IT Job Titles: 5 / 10 / 20 Year Forecast

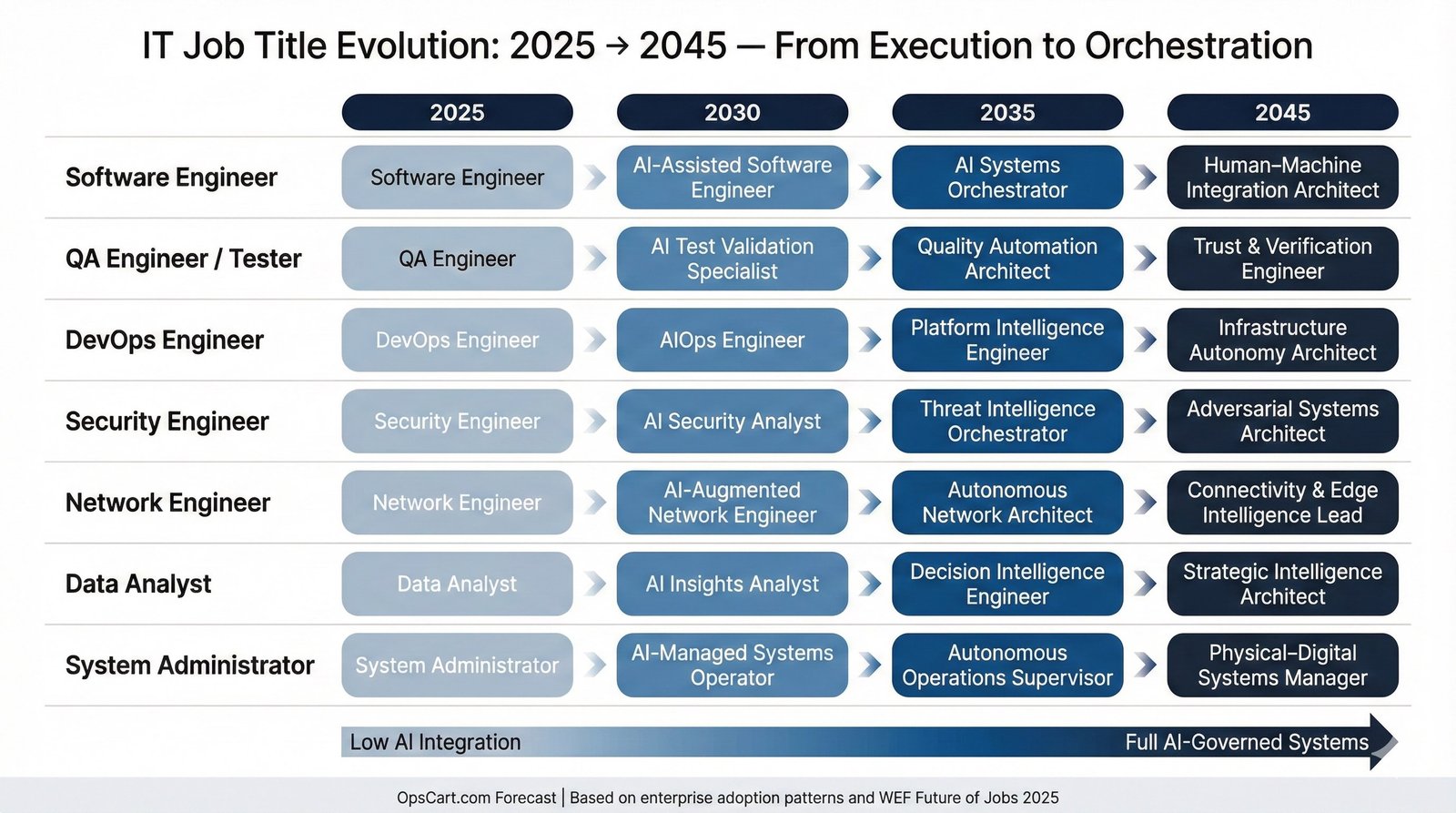

Job titles are lagging indicators. The work has already shifted; the LinkedIn profiles will catch up. (As shown in Figure 6 below.)

Figure 6: IT Job Title Evolution Timeline. Color intensity deepens as roles become more AI-governed. The pattern is consistent: Execution → Orchestration → Architecture → Autonomy Governance. Based on enterprise adoption patterns and WEF Future of Jobs 2025 projections.

| Current Title (2025) | 5-Year (2030) | 10-Year (2035) | 20-Year (2045) |
|---|---|---|---|
| Software Engineer | AI-Assisted Software Engineer | AI Systems Orchestrator | Human-Machine Integration Architect |
| Software Developer | Prompt-Augmented Developer | Solution Synthesis Engineer | Autonomous Systems Designer |
| QA Engineer / Tester | AI Test Validation Specialist | Quality Automation Architect | Trust & Verification Engineer |
| DevOps Engineer | AI-Ops Engineer | Platform Intelligence Engineer | Infrastructure Autonomy Architect |
| Platform Engineer | AI Platform Engineer | Cognitive Infrastructure Lead | Ecosystem Orchestration Strategist |
| Network Engineer | AI-Augmented Network Engineer | Autonomous Network Architect | Connectivity & Edge Intelligence Lead |
| Security Engineer | AI Security Analyst | Threat Intelligence Orchestrator | Adversarial Systems Architect |
| Data Analyst | AI Insights Analyst | Decision Intelligence Engineer | Strategic Intelligence Architect |
| Business Analyst | AI-Augmented BA | Outcome Design Strategist | Human-Org Intelligence Architect |
| System Administrator | AI-Managed Systems Operator | Autonomous Ops Supervisor | Physical-Digital Systems Manager |
| Technical Writer | AI Content Curator | Knowledge System Designer | Human-AI Communication Architect |
| Project Manager | AI Delivery Manager | Outcome Orchestrator | Strategic Initiative Architect |

Pattern Analysis

1. Execution → Orchestration. At every horizon, the role moves from “doing the work” to “governing systems that do the work.” A DevOps engineer who today writes Terraform moves to validating AI-generated infrastructure-as-code against compliance policies.

2. Domain Convergence. By 2035–2045, DevOps, Platform Engineering, and Security converge into shared “infrastructure intelligence” competencies. This mirrors the DevOps/SRE/Platform engineering convergence we’ve already seen, just accelerated.

3. Physical Persistence. Roles that intersect with hardware—Network Engineering, System Administration—retain physical maintenance components. The person who can configure an AI-managed network and swap a failed SFP module at 2 AM has a career that compounds.
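The shift from writing infrastructure code to validating AI-generated infrastructure against policy, described in the first pattern, might look something like this in miniature. The resource schema (`type`, `encrypted`, `public`, `tags`) and the three rules are hypothetical; a real setup would more likely use a dedicated policy engine against actual plan output.

```python
from typing import Callable

Resource = dict
Rule = tuple[str, Callable[[Resource], bool]]

# Each rule is a description plus a predicate that returns True when compliant.
RULES: list[Rule] = [
    ("storage must be encrypted",
     lambda r: r.get("type") != "storage" or r.get("encrypted") is True),
    ("no public ingress on databases",
     lambda r: r.get("type") != "database" or not r.get("public", False)),
    ("every resource needs an owner tag",
     lambda r: "owner" in r.get("tags", {})),
]


def validate(resources: list[Resource]) -> list[str]:
    """Return one human-readable violation per failed rule."""
    violations = []
    for res in resources:
        for description, is_compliant in RULES:
            if not is_compliant(res):
                violations.append(f"{res.get('name', '<unnamed>')}: {description}")
    return violations


# Stand-in for an AI-generated plan, already parsed into dicts.
generated_plan = [
    {"name": "logs", "type": "storage", "encrypted": False, "tags": {"owner": "platform"}},
    {"name": "orders-db", "type": "database", "public": True, "tags": {}},
    {"name": "cache", "type": "cache", "tags": {"owner": "web"}},
]

for violation in validate(generated_plan):
    print(violation)
```

The human contribution here is deciding what goes into `RULES` and what to do about each violation, which is the orchestration work the pattern describes.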

7. The Maintenance Paradox

Here’s the irony most automation narratives miss: the more systems you automate, the more maintenance you create.

Anyone who’s operated Kubernetes in production understands this. Kubernetes “automates” container orchestration—and in return, you maintain etcd clusters, manage certificate rotation, debug CNI plugin conflicts, upgrade control planes, and troubleshoot OOMKilled pods at 3 AM. The automation didn’t eliminate operational work. It transformed it.

# The Maintenance Paradox: Automation creates maintenance

#

# Amazon: 750,000 robots → need technicians, floor managers, repair teams

# Amazon created 700 NEW job categories from robotics alone.

#

# AI Agents in Production: Every agent needs →

# - Monitoring & observability (drift detection, hallucination tracking)

# - Prompt maintenance (as models update, prompts degrade)

# - Retraining pipelines (data freshness, concept drift)

# - Exception handling workflows (human escalation paths)

#

# Same ops burden as Kubernetes, different substrate.

For IT professionals, this creates a defensible career path, but with a critical caveat: the maintenance roles are fewer than the roles they replace. Amazon’s mechatronics apprenticeship has trained roughly 5,000 people since 2019. That cannot offset the 600,000 future hires Amazon’s automation plan would make unnecessary.
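The agent maintenance items listed above (monitoring, exception handling, human escalation paths) can be sketched as a small router. This assumes each agent result carries a self-reported confidence score in [0, 1], which is a hypothetical field; the threshold and class names are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class AgentResult:
    task_id: str
    answer: str
    confidence: float  # hypothetical self-reported score in [0, 1]


@dataclass
class EscalationRouter:
    threshold: float = 0.8
    auto_approved: list[AgentResult] = field(default_factory=list)
    human_queue: list[AgentResult] = field(default_factory=list)

    def route(self, result: AgentResult) -> str:
        """Send low-confidence results to a human; accept the rest."""
        if result.confidence >= self.threshold:
            self.auto_approved.append(result)
            return "auto"
        self.human_queue.append(result)
        return "human"

    def escalation_rate(self) -> float:
        """Share of results escalated so far; a rising rate can signal drift."""
        total = len(self.auto_approved) + len(self.human_queue)
        return len(self.human_queue) / total if total else 0.0


router = EscalationRouter()
decisions = [
    router.route(AgentResult("T-1", "restart pod", 0.95)),
    router.route(AgentResult("T-2", "drop table", 0.55)),
    router.route(AgentResult("T-3", "scale to 4 replicas", 0.81)),
]
print(decisions, f"{router.escalation_rate():.0%} escalated")
```

Someone still has to staff `human_queue` and watch the escalation rate, which is the ongoing maintenance burden the section describes.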

8. Where Displaced Workers Go

The WEF projects 170 million new roles by 2030, but the fastest-growing jobs require expertise that doesn’t materialize from a bootcamp. Research indicates 77% of AI jobs require master’s degrees.

AI-Adjacent Upskilling: Prompt engineering, AI validation, model governance. A QA engineer pivots to AI output validation. A DevOps engineer pivots to AI agent monitoring.

Physical Maintenance Economy: Robotic fleet management, hardware lifecycle, edge deployment. Hands-on skills become career advantages.

Last-Mile Human Verification: The DevOps engineer validating AI-generated infrastructure against GxP compliance. The security engineer owning incident response decisions.

The Reskilling Gap: Reskilling takes 12–24 months. AI advances on 3–6 month cycles. The pipeline can’t keep pace.

Why Universities and Governments Are Structurally Behind

Curriculum refresh cycles: University degree programs update on 3–5 year cycles. AI capability leapfrogs every 6–12 months. A student entering a computer science program today graduates into a field that has been reshaped twice before they earn their diploma.

Credential lag: Certifications like the AWS Solutions Architect or Kubernetes CKA were designed around human-execution models. When AI generates Terraform configurations and Helm charts, the credential measures a skill the market no longer values in isolation.

Apprenticeship mismatch: Amazon’s mechatronics apprenticeship trains ~1,000 technicians per year. Its automation plans project 600,000 future hires avoided by 2033. The training pipeline falls short of that volume by roughly two orders of magnitude.

Physical trades stigma: Despite low AI exposure (10–35%), enrollment in trade schools and apprenticeship programs hasn’t increased proportionally to demand. Plumbing, electrical, and HVAC certifications offer more long-term job security than many four-year tech degrees—yet cultural bias toward “knowledge work” persists.
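The scale of the apprenticeship mismatch can be checked with a few lines of arithmetic. The sketch uses the two figures quoted above and follows the article's framing of comparing a yearly training rate against a multi-year total, which is a rough comparison rather than a forecast.

```python
import math


def orders_of_magnitude_gap(demand: float, supply: float) -> float:
    """Base-10 log of the demand-to-supply ratio."""
    if demand <= 0 or supply <= 0:
        raise ValueError("demand and supply must be positive")
    return math.log10(demand / supply)


trained_per_year = 1_000   # apprenticeship throughput quoted in the text
avoided_hires = 600_000    # future hires the automation plan makes unnecessary

gap = orders_of_magnitude_gap(avoided_hires, trained_per_year)
years_to_match = avoided_hires / trained_per_year

print(f"gap: {gap:.2f} orders of magnitude")
print(f"years at current throughput to match: {years_to_match:.0f}")
```

At the quoted throughput, the program would need centuries to train as many people as the plan avoids hiring, which is what "two orders of magnitude" means in practice.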

9. What Would Have to Be False for This Thesis to Fail?

Strong arguments survive steelmanning. For the Great Inversion thesis to be wrong, one or more of the following conditions would need to hold:

Condition 1: LLM capability plateaus permanently. If large language models hit a hard capability ceiling and stop improving beyond current levels, task automation percentages would stabilize rather than compress toward 95/5. This is possible but inconsistent with observed scaling trends—GPT-4 to Claude 3 to Gemini Ultra each demonstrated capability gains. The plateau argument requires that all architectural advances (agents, multimodal, reasoning chains) simultaneously stall.

Condition 2: Regulation freezes AI deployment. If governments impose strict moratoria on AI in workplace automation (similar to GDPR’s impact on data processing), adoption curves flatten. The EU AI Act moves in this direction, but no major economy has restricted AI deployment in cognitive roles. Enterprise incentives (cost reduction, shareholder pressure) currently overwhelm regulatory friction.

Condition 3: Robotics leapfrogs cognition. If a robotics breakthrough enables physical automation to accelerate past cognitive automation, the “inversion” reverses to the traditional pattern. This requires solving Moravec’s Paradox—general-purpose physical dexterity in unstructured environments. Tesla’s robotaxi and Amazon’s Vulcan suggest this remains a frontier challenge, not an imminent breakthrough.

Condition 4: Labor cost advantages reverse. If human cognitive labor becomes cheaper than AI inference (e.g., due to wage deflation or inference cost increases), the economic incentive for automation disappears. Current trends move in the opposite direction: inference costs have dropped over 90% since 2023 while wages remain stable or increase.
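Condition 4 can be framed as a break-even calculation: how expensive would inference have to become before a human is cheaper per task? The sketch below is illustrative only. Every input (wage, throughput, tokens per task, token price) is a hypothetical placeholder, not data from the cited sources, and the model ignores human review time, error costs, and integration overhead.

```python
from dataclasses import dataclass


@dataclass
class TaskEconomics:
    hourly_wage_usd: float         # hypothetical fully loaded human cost per hour
    tasks_per_hour: float          # hypothetical human throughput
    tokens_per_task: int           # hypothetical tokens consumed per AI-completed task
    usd_per_million_tokens: float  # hypothetical inference price

    def human_cost_per_task(self) -> float:
        return self.hourly_wage_usd / self.tasks_per_hour

    def ai_cost_per_task(self) -> float:
        return self.tokens_per_task / 1_000_000 * self.usd_per_million_tokens

    def breakeven_price_per_million_tokens(self) -> float:
        """Inference price at which AI and human cost per task are equal."""
        return self.human_cost_per_task() * 1_000_000 / self.tokens_per_task


# Same hypothetical task before and after a 90% drop in inference price.
baseline = TaskEconomics(50.0, 4.0, 20_000, usd_per_million_tokens=30.0)
after_drop = TaskEconomics(50.0, 4.0, 20_000, usd_per_million_tokens=3.0)

for label, case in (("baseline", baseline), ("after 90% drop", after_drop)):
    print(f"{label}: human ${case.human_cost_per_task():.2f} "
          f"vs AI ${case.ai_cost_per_task():.2f} per task")
print(f"break-even price: ${baseline.breakeven_price_per_million_tokens():.0f} per million tokens")
```

With these placeholder inputs the break-even price sits far above either inference price, which is the shape of the argument in Condition 4. Substituting real wage and pricing data would change the numbers, not the method.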

10. Implications for Engineers and IT Professionals

Skill Decay vs. Skill Compounding

The central career risk is not replacement—it’s skill decay. When AI handles 80% of code generation, your coding fluency atrophies. When AI writes deployment manifests, your Kubernetes expertise erodes. Skills not exercised decay.

I’ve seen this in my own work managing 8+ production AKS clusters. The moment you stop doing kubectl debug by hand and rely entirely on AI diagnostics, your ability to reason about pod scheduling and resource contention degrades. The AI gives you an answer. But when it gives you the wrong answer at 3 AM, you need the expertise to catch it.

# Skills that DECAY with AI reliance:
# - Syntax fluency (any language)
# - Boilerplate configuration writing
# - Manual log parsing & repetitive test authoring
#
# Skills that COMPOUND with AI proliferation:
# - System-level failure analysis ("why did this cascade?")
# - Architecture under constraint ("GxP + cost + performance")
# - Stakeholder translation ("here's why migration takes 6 months")
# - Security threat modeling ("what attack surface does this AI create?")
# - Physical infrastructure judgment ("this rack layout fails cooling")

The Career Path Fork

Path A → Strategic Orchestration (Up): Governing AI systems, designing architectures, managing risk. The Stratosphere. (As shown in Figure 1.)

Path B → Physical Systems (Out): Maintaining infrastructure, hardware fleets, edge deployment. The Ground Layer.

Both are viable, and choosing one is not optional. Staying in the middle, in routine cognitive work, is standing on a melting glacier.

11. Conclusion: The Journey, the Destination, and What to Do Monday Morning

The Great Inversion unfolds in three phases.

Phase 1 — The Compression (2025–2030): Routine cognitive work is aggressively automated. Entry-level roles contract sharply. The 80/20 split becomes default. Companies restructure with 30–50% fewer knowledge workers.

Phase 2 — The Bifurcation (2030–2035): The workforce splits into Stratosphere and Ground Layer. The middle hollows out. Job titles stabilize around orchestration and maintenance paradigms.

Phase 3 — The Integration (2035–2045): Physical robotics matures. The Ground Layer compresses. Remaining human roles concentrate on novel problem-solving, ethical governance, and the truly unpredictable.

The question that should keep every engineer awake at night is not “Will AI take my job?” It is this: If 75–85% of cognitive task content can be automated today, and 95% within a decade, what is the 5% that justifies human presence—and are you investing in becoming indispensable at it?

Your Monday Morning Action Checklist

🛑 STOP Doing

- Writing boilerplate code, configs, or docs by hand

- Running manual test suites you could automate

- Treating AI tools as optional “nice-to-have”

- Assuming your current skill set has a 10-year shelf life

- Conflating “busy” with “valuable”

✅ DOUBLE DOWN On

- Architecture & system design (the “why” behind decisions)

- Cross-team communication & stakeholder translation

- Compliance judgment (GxP, SOC2, HIPAA, GDPR)

- Incident response leadership under pressure

- Building relationships that AI cannot replicate

📚 LEARN Next

- Physically: Hardware troubleshooting, DC operations, edge deployment

- Architecturally: AI agent orchestration, model governance, drift detection

- Strategically: Cost modeling, risk quantification, business case writing

- Adversarially: Threat modeling, red team thinking, security architecture

⚡ DELEGATE to AI Immediately

- First-draft code, configs, IaC templates

- PR descriptions, commit messages, runbook drafts

- Log analysis, alert correlation, CVE triage

- Meeting summaries, status reports, documentation updates

- Test case generation, regression scripting

The question of which 5% justifies your presence has no comfortable answer. But the engineers who confront it honestly will navigate the Inversion. The rest will discover, too late, that the glacier they were standing on has already melted.

Glossary

Agentic AI — AI systems that autonomously decompose complex tasks into subtasks, execute them sequentially or in parallel, verify outputs, and iterate without continuous human prompting. The cognitive equivalent of a CI/CD pipeline.

Cognitive Screen Work — Tasks performed entirely through digital interfaces: coding, analysis, report writing, configuration, communication. The native medium of large language models and the primary target of generative AI automation.

Moravec’s Paradox — The observation (Hans Moravec, 1988) that tasks easy for humans (walking, grasping, perceiving) are extremely hard for robots, while tasks hard for humans (chess, calculation, pattern-matching) are easy for computers. Explains why physical labor resists automation while cognitive labor falls first.

Task Compression — The progressive reduction of human-required task content within a role as AI absorbs routine work. Measured as AI-amenable percentage vs. human-required percentage (e.g., 80/20 compressing to 95/5).

Task Exposure — The percentage of discrete tasks within a role that are amenable to AI execution, as measured by occupation-level analysis frameworks (Bloomberg, OpenAI/UPenn, OECD). Distinct from job elimination: high task exposure indicates role transformation, not necessarily role deletion.

Stratosphere — The top layer (~10%) of the polarized workforce: AI architects, system orchestrators, decision makers, ethics governance. High abstraction, high compensation, small headcount.

Ground Layer — The base layer (~75%) of the polarized workforce: physical maintenance, hardware repair, robotic fleet management, edge deployment. Moderate compensation, high tactile skill, largest headcount.

The Hollow Middle — The evacuated center of the workforce distribution where routine cognitive work once resided. Previously the largest employment segment, now compressed by AI into the Stratosphere and Ground Layer. The bell curve becomes a barbell.
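The Task Exposure and Task Compression definitions above reduce to two small calculations, sketched here under stated assumptions. The role decomposition is hypothetical (its task names are borrowed from the checklist earlier in the article), and the function names are illustrative rather than part of any cited framework.

```python
def task_exposure(tasks: dict[str, bool]) -> float:
    """Share of a role's discrete tasks flagged as AI-amenable (0.0 to 1.0)."""
    if not tasks:
        raise ValueError("a role needs at least one task")
    return sum(tasks.values()) / len(tasks)


def human_share_reduction(ai_share_before: float, ai_share_after: float) -> float:
    """Fractional drop in human-required task content as the AI share grows."""
    human_before = 1.0 - ai_share_before
    human_after = 1.0 - ai_share_after
    return (human_before - human_after) / human_before


# Hypothetical role decomposition, for illustration only.
sre_role = {
    "write deployment manifests": True,
    "draft runbooks": True,
    "correlate alerts": True,
    "triage CVEs": True,
    "lead incident response": False,
}

print(f"task exposure: {task_exposure(sre_role):.0%}")
print(f"human share reduction, 80/20 to 95/5: {human_share_reduction(0.80, 0.95):.0%}")
```

In this sketch, an 80/20 role compressing to 95/5 loses three quarters of its human-required task content, even though the AI-amenable share moves by only 15 percentage points. High exposure here still describes role transformation, not role deletion, in line with the Task Exposure definition.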

Appendix: Data Sources, Methodology, and References

Source Notes and Methodological Clarifications

| Claim | Source | Year | Type | Notes |
|---|---|---|---|---|
| “75–85% of cognitive tasks AI-amenable” | Bloomberg Research / OpenAI-UPenn | 2023–2025 | Empirical (measured) | Based on occupation task-level analysis; “amenable” means AI can perform the task, not that it has replaced it everywhere |
| “92M jobs displaced by 2030” | WEF Future of Jobs Report 2025 | 2025 | Projection (modeled) | Net effect with 170M job creation (+78M net); survey of 1,000+ employers |
| “300M jobs globally exposed” | Goldman Sachs | 2023–2025 | Projection (modeled) | Exposure ≠ elimination; describes roles with significant task overlap |
| “54,694 AI-attributed layoffs (U.S.)” | Challenger, Gray & Christmas | 2025 | Empirical (tracked) | Employer-disclosed, AI-cited layoff announcements |
| “Support team 9K → 5K” | Salesforce / Marc Benioff | Sep 2025 | Empirical (disclosed) | Logan Bartlett Show podcast; corroborated by earnings data |
| “750K+ robots; 600K future hires eliminated” | Amazon / NYT | Oct 2025 | Internal strategy docs | Obtained by NYT; 600K refers to hires avoided by 2033, not current layoffs |
| “15,000 roles eliminated” | Microsoft / Nadella | 2025 | Empirical (disclosed) | Multiple rounds; 9,000 in July alone |
| “13% drop in entry-level hiring” | Stanford Digital Economy Lab | 2025 | Empirical (measured) | Based on ADP employment data for AI-exposed occupations |
| “AI exposure exactly opposite prior automation” | Brookings Institution | 2024–2025 | Empirical (analysis) | Generative AI & the American Worker research series |
| “Robotaxi ~135 vehicles” | Tesla / industry reports | Dec 2025 | Empirical (reported) | Austin launch June 2025 with safety monitors; NHTSA investigation opened |
| “30% of hours worked automatable by 2030” | McKinsey Global Institute | 2023–2025 | Projection (modeled) | Based on task-level analysis across occupations |
| “53% market analyst tasks automatable” | Bloomberg Research | 2025 | Empirical (measured) | Task Automation Analysis; role-specific decomposition |

Note: All statistics are from publicly available reports, earnings calls, podcast transcripts, and journalistic investigations. Projections are identified as such throughout. No statistics fabricated.

© 2026 OpsCart.com | Shamsher Khan | All rights reserved.